I Built an AI Product for Infrastructure PMs in 6 Days. Ask These 5 Questions Before You Do.

Infrastructure Catalyst

Issue #7 | February 24, 2026

In February, Anthropic and Cerebral Valley hosted Built with Opus 4.6, a global virtual hackathon marking one year since Claude Code launched. Over 13,000 people applied for 500 spots, putting the acceptance rate under 4%, roughly on par with Y Combinator. I was one of the 500, and I'm not a software developer or a startup founder. I'm a practicing Professional Engineer and Project Management Professional who spends most of his week managing roadway rehabs and condition surveys.

Each participant received $500 in Claude API credits and access to Opus 4.6, Anthropic's most capable model, with a 1-million-token context window. The tool behind it all, Claude Code, is an AI coding agent that runs in your terminal and builds alongside you. You describe what you want. It writes the code, creates the files, debugs the errors. It doesn't autocomplete lines. It architects entire features and refactors across dozens of files at once. After six days of building with it, I can say it's the most capable development tool I've ever used.

What surprised me most wasn't the technology. It was the people. The hackathon Discord was full of builders, and most weren't traditional developers. Designers, operators, domain experts from healthcare, finance, education, and one civil engineer from California. The common thread wasn't coding skill. It was knowing a problem deeply enough to direct the AI toward a real solution.

What 6 Days Produced

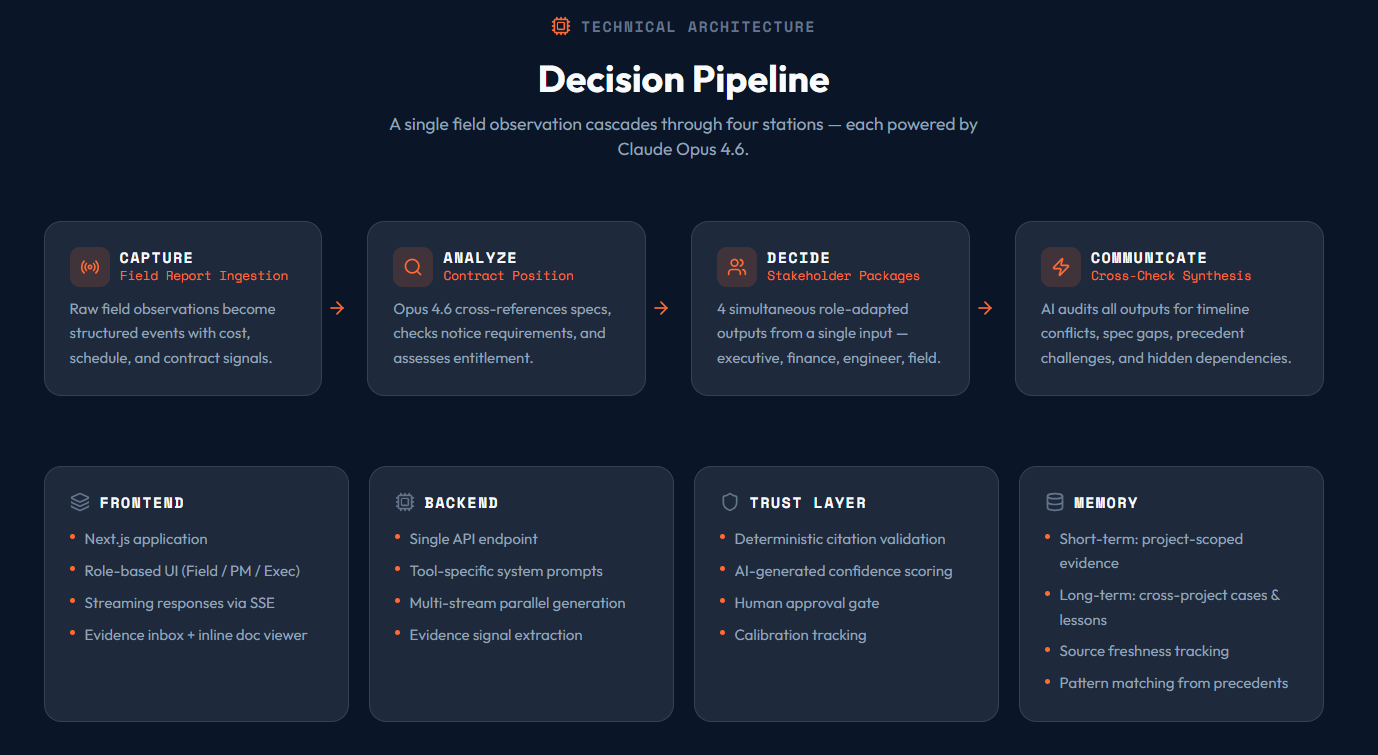

I built ICelerate, a decision intelligence tool for infrastructure PMs. It takes the data we already generate (field observations, contracts, specs, RFIs) and produces role-adapted, contract-aware outputs. The hero feature: type in one field condition, and four panels stream simultaneously with separate analyses for the Director, CFO, Engineer, and Contractor.
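For readers curious what "four panels stream simultaneously" looks like in code, here is a minimal sketch of the fan-out pattern. It is not ICelerate's actual implementation: `fanOut`, `demoStream`, and the `Role` type are hypothetical names, and `demoStream` is a stand-in for a real streaming model call.

```typescript
// Hypothetical sketch of a four-way streaming fan-out. Names are illustrative.
type Role = "Director" | "CFO" | "Engineer" | "Contractor";
const ROLES: Role[] = ["Director", "CFO", "Engineer", "Contractor"];

// Stand-in for a streaming model call; a real one would yield text
// chunks as they arrive from an API.
async function* demoStream(role: Role, observation: string): AsyncGenerator<string> {
  for (const chunk of [`[${role}] `, "re: ", observation]) {
    yield chunk;
  }
}

// Start one stream per role, run them concurrently, and push every chunk
// to the UI callback as soon as it arrives.
async function fanOut(
  observation: string,
  stream: (role: Role, observation: string) => AsyncIterable<string>,
  onChunk: (role: Role, chunk: string) => void,
): Promise<Record<Role, string>> {
  const panels: Record<Role, string> = { Director: "", CFO: "", Engineer: "", Contractor: "" };
  await Promise.all(
    ROLES.map(async (role) => {
      for await (const chunk of stream(role, observation)) {
        panels[role] += chunk; // accumulate the full text per panel
        onChunk(role, chunk);  // update that panel incrementally
      }
    }),
  );
  return panels;
}

// Usage: log each chunk with its panel name.
fanOut("Unmarked utility found at station 12+50", demoStream, (role, chunk) =>
  console.log(role, chunk),
);
```

In this sketch, `Promise.all` rejects as soon as any one stream fails. A production version would probably want per-panel error handling so one failed analysis doesn't blank the other three.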

The final product: 43,632 lines of TypeScript across 226 files. Five core tools. Export to PowerPoint, Excel, PDF. Deployed live on the web. I made every architecture decision. Claude Code built it.

What the AI Couldn't Do for Me

The code came fast. The problems came faster.

Opus 4.6 would sometimes report high confidence when a significant portion of its claims had no supporting evidence. The initial build had zero prompt injection protection. Responsive layouts broke on real iPhones despite looking fine in browser tools, and fixing them took three rounds of changes across 37 files.

These aren't failures of Claude Code. They're problems that require an engineer's judgment. Claude Code builds what you tell it to build. It doesn't question whether the architecture is secure, whether the AI's confidence should be trusted, or whether breakpoints hold on actual hardware. That's still your job.
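One way to act on the confidence problem is to stop taking the model's self-reported number at face value and cap it by how many claims actually cite evidence. The sketch below illustrates that idea under assumptions of my own: the `Claim` shape and both function names are hypothetical, and this is not a description of ICelerate's scoring system.

```typescript
// Hypothetical sketch: cap self-reported confidence by evidence coverage.
interface Claim {
  text: string;
  evidenceIds: string[]; // IDs of source documents or clauses backing the claim
}

// Fraction of claims that cite at least one piece of evidence.
function supportedFraction(claims: Claim[]): number {
  if (claims.length === 0) return 0;
  const supported = claims.filter((c) => c.evidenceIds.length > 0).length;
  return supported / claims.length;
}

// The displayed confidence never exceeds the share of supported claims.
function calibratedConfidence(selfReported: number, claims: Claim[]): number {
  const clamped = Math.min(1, Math.max(0, selfReported));
  return Math.min(clamped, supportedFraction(claims));
}

const claims: Claim[] = [
  { text: "Differing site condition clause applies", evidenceIds: ["contract-4.2"] },
  { text: "Schedule impact is two weeks", evidenceIds: [] },
  { text: "Notice is due within 10 days", evidenceIds: ["contract-7.1"] },
  { text: "Cost exposure is under contingency", evidenceIds: [] },
];

// The model says 0.9, but only 2 of 4 claims cite evidence.
console.log(calibratedConfidence(0.9, claims)); // 0.5
```

This only checks that a citation exists, not that it is correct. Whether the cited clause actually says what the AI claims still needs a human reviewer.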

The shipped product has no authentication. All project data sits in unencrypted browser storage. Every contract clause passes through a third-party API. Building it took six days. Making it safe enough for a real project? Different timeline entirely.

5 Questions Before You Build

Every infrastructure PM with technical curiosity is going to face this question soon: build a custom AI tool, or use what exists? Here's the checklist I'd run first.

1. Does this product already exist? Construction AI tools like Procore Copilot, Alice Technologies, and nPlan are in the market. I built ICelerate because nothing combined decision packaging with contract-aware field reporting the way I needed. But if your pain point is covered by a product with a security team and SOC 2 compliance, building your own is almost always the wrong call.

2. What data are you sending to the AI? Every ICelerate query sends field observations, contract documents, and project history to Anthropic's API. "We send your $50M contract to a third-party API" is a conversation you need to have before writing a single line of code.

3. Can you demo the value in under 60 seconds? ICelerate's Decision Package is a 15-second demo. The confidence scoring system I spent hours building is invisible to most users. Build the thing people can see working first.

4. Who verifies the AI outputs? The AI generates contract references, cost estimates, and schedule impacts. If those are wrong, a PM makes a bad decision on a real project. You need a plan for when the AI gets it wrong.

5. What does each user cost you? Each API call costs real money. At scale, AI inference cost can exceed your hosting cost. Know your unit economics before you set pricing.
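The arithmetic behind question 5 fits in a few lines. This is a back-of-envelope sketch, and every number in it is a placeholder assumption: the usage figures are invented for illustration, and the per-token prices are not Anthropic's actual rates, so check current pricing before relying on the result.

```typescript
// Back-of-envelope inference cost per user. All inputs are assumptions.
interface UsageAssumptions {
  queriesPerUserPerMonth: number;
  callsPerQuery: number;       // e.g. 4 if each query fans out to four role panels
  inputTokensPerCall: number;  // prompt size, including any contract text sent
  outputTokensPerCall: number;
  inputPricePerMTok: number;   // USD per million input tokens (placeholder)
  outputPricePerMTok: number;  // USD per million output tokens (placeholder)
}

function monthlyCostPerUser(u: UsageAssumptions): number {
  const costPerCall =
    (u.inputTokensPerCall * u.inputPricePerMTok +
      u.outputTokensPerCall * u.outputPricePerMTok) /
    1_000_000;
  return u.queriesPerUserPerMonth * u.callsPerQuery * costPerCall;
}

const example = monthlyCostPerUser({
  queriesPerUserPerMonth: 100,
  callsPerQuery: 4,
  inputTokensPerCall: 20_000,
  outputTokensPerCall: 1_000,
  inputPricePerMTok: 5,   // placeholder, not a quoted price
  outputPricePerMTok: 25, // placeholder, not a quoted price
});

console.log(example); // 50 (USD per user per month under these assumptions)
```

Under these made-up inputs, each call costs $0.125 and each user costs $50 a month in inference alone. Input tokens dominate here, which is typical when every call carries contract text.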

Quick Win

Before you build, fill in this sentence: "The existing product I'd use instead is _____, and the specific reason it doesn't work for me is _____." If you can't fill in both blanks, you haven't made the case for building yet.

What I Took Away

Tools like Claude Code with Opus 4.6 have made it possible for a civil engineer to prototype products that would have required a development team two years ago. The builders I met during the hackathon proved this isn't a fluke.

But "can build" and "should deploy" are different conversations. The security gaps, the verification problems, the ongoing costs: none of that disappears because the code was easy to write. The same rigor we apply to the structures we design needs to apply to the tools we build around them.

Six days with Claude Code taught me that the most important skill isn't prompting. It's knowing when to build, when to buy, and when to stop.

Joseph Dib, PE, PMP